Researching neuromorphic computers. Curious about abstractions. Cares about #FOSS

ID: 221431142

https://jepedersen.dk 30-11-2010 17:08:04

829 Tweets

512 Followers

448 Following

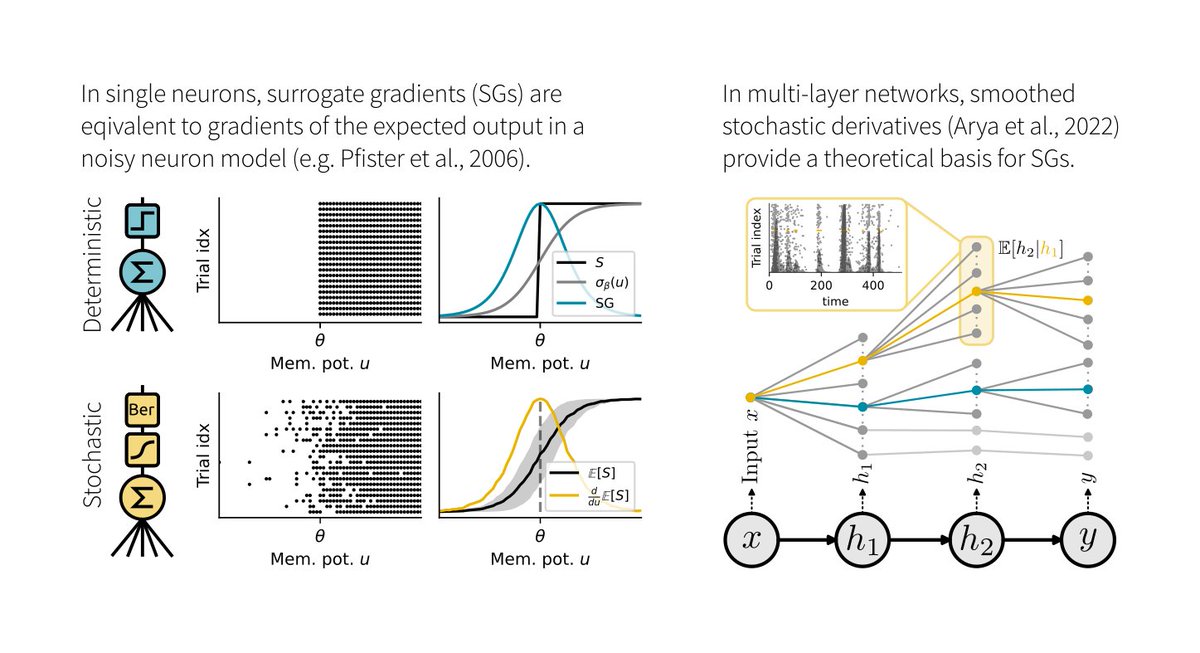

1/6 Surrogate gradients (SGs) are empirically successful at training spiking neural networks (SNNs). But why do they work so well, and what is their theoretical basis? In our new preprint led by Julia Gygax, we provide the answers: arxiv.org/abs/2404.14964

🚨 New paper alert! Have you ever suspected that spikes, Dale's law, and E/I balance might be more than just biological constraints, but rather fundamental to how brains compute? Check out my latest work with Christian Machens ChampalimaudResearch: tinyurl.com/mpwkkubd 🧵 (1/5)

Surya Ganguli Stanislav Fort Kording Lab 🦖 Blake Richards Friedemann Zenke Aran Nayebi This depends on what "biologically plausible" means. I like to read about gradient approximations that relate to measured or measurable plasticity mechanisms. Important references, imo: nature.com/articles/s4159… nature.com/articles/s4159… nature.com/articles/s4146… shorturl.at/CB8Cc