Arvind Narayanan

@random_walker

Princeton CS prof. Director @PrincetonCITP. I write about the societal impact of AI, tech ethics, & social media platforms.

BOOK: AI Snake Oil. Views mine.

ID:10834752

https://www.cs.princeton.edu/~arvindn/

04-12-2007 11:14:14

12.0K Tweets

118.9K Followers

410 Following


thanks Arvind Narayanan and Matthew Salganik for the support!

truly excited to be starting my cs phd at princeton this fall 🥳

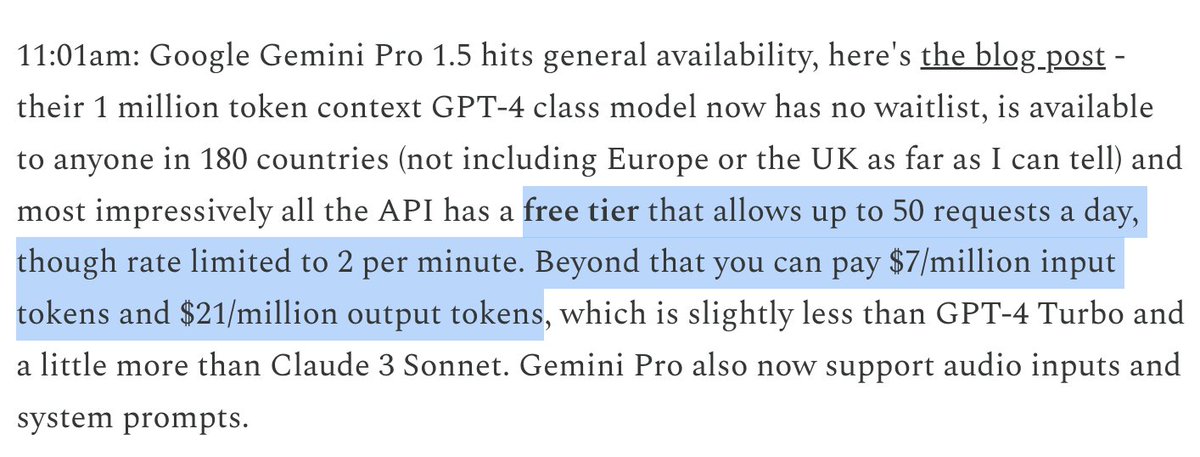

This is the craziest example of the ongoing AI frenzy I've ever seen. As far as I can tell, this means that a single developer can make queries that burn $1,400 worth of compute for free *per day* (e.g., 'reverse the following 1M-token string'): 50 * (7+21) = 1,400.

HT Simon Willison

I'm ecstatic to share that preorders are now open for the AI Snake Oil book! The book will be released on September 24, 2024.

Arvind Narayanan and I have been working on this for the past two years, and we can't wait to share it with the world.

Preorder: princeton.press/awecv19z

Do Community Notes reduce the spread of false information? Short answer: Yes. Longer answer: Yes, but... A 🧵 arxiv.org/abs/2404.02803

There's nothing exceptional about tech policy that makes it harder than any other type of policy requiring deep expertise. If we can do health or nuclear policy, we can do tech policy.

Plus new writings and an upcoming event for policymakers aisnakeoil.com/p/tech-policy-…

w/ Sayash Kapoor

Responses to NTIA on open foundation models (FMs)

1. On marginal risk

hai.stanford.edu/sites/default/…

Led w/ Sayash Kapoor, Kevin Klyman, Dan Ho, Percy Liang, and Arvind Narayanan

2. On release strategies, thresholds

ias.edu/sites/default/…

Led by Alondra Nelson, Christine Custis, Dorothy Chou, Marc Aidinoff, Séb Krier, and William Isaac

Been saying all this for a while!

aisnakeoil.com/p/ai-safety-is… AI safety is not a model property

aisnakeoil.com/p/model-alignm… Model alignment protects against accidental harms, not intentional ones

aisnakeoil.com/p/licensing-is… Licensing is neither feasible nor effective for addressing AI risks