Tri Dao

@tri_dao

Incoming Asst. Prof @PrincetonCS, Chief Scientist @togethercompute, PhD in machine learning & systems @StanfordAILab.

02-05-2012 07:13:50

428 Tweets

10.1K Followers

283 Following

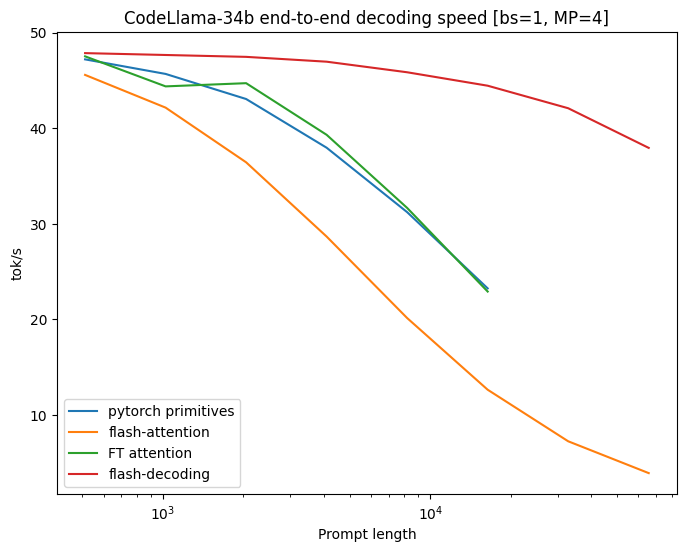

Announcing Flash-Decoding, to make long-context LLM inference up to 8x faster! Great collab with Daniel Haziza, Francisco Massa and Grigory Sizov.

Main idea: load the KV cache in parallel as fast as possible, then separately rescale to combine the results.

1/7
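A minimal sketch of the "compute per chunk, then rescale to combine" idea from the tweet above, in plain Python for a single query vector. It is an illustration under stated assumptions, not the actual Flash-Decoding kernel: the function names `attend_chunk` and `split_kv_attention` and the `chunk_size` parameter are hypothetical, the chunks are processed in an ordinary loop rather than in parallel on a GPU, and the usual 1/sqrt(d) score scaling is omitted for brevity. Each chunk returns its own softmax-normalised output together with the log-sum-exp of its scores, and the final step reweights the chunk outputs by those log-sum-exp values.

```python
import math


def attend_chunk(q, keys, values):
    """Attention of one query over one chunk of the KV cache.

    Returns the chunk's softmax-weighted output and the log-sum-exp
    of its attention scores (needed later for rescaling).
    """
    scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    out = [
        sum(w * v[d] for w, v in zip(weights, values)) / total
        for d in range(dim)
    ]
    return out, m + math.log(total)


def split_kv_attention(q, keys, values, chunk_size):
    """Attention computed chunk by chunk, then combined by rescaling.

    Assumption: on real hardware the chunks would be handled in
    parallel; here they are processed one after another.
    """
    partials = [
        attend_chunk(q, keys[i:i + chunk_size], values[i:i + chunk_size])
        for i in range(0, len(keys), chunk_size)
    ]
    # Global log-sum-exp over all chunks.
    m = max(lse for _, lse in partials)
    global_lse = m + math.log(sum(math.exp(lse - m) for _, lse in partials))
    # Each chunk's output is weighted by its share of the softmax mass.
    dim = len(values[0])
    out = [0.0] * dim
    for chunk_out, lse in partials:
        scale = math.exp(lse - global_lse)
        for d in range(dim):
            out[d] += scale * chunk_out[d]
    return out
```

Because each chunk carries its own log-sum-exp, the combined result matches attention computed over the whole cache in one pass, up to floating-point rounding.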